Never before in history have we had so many tools and channels we could use to deliver research and evaluation insights directly to people who could actually use them. And yet, most organizations still rely on outdated and ineffective dissemination strategies.

Now it’s easy to bash the organizations, but the truth is that our research and evaluation frameworks rarely do more than pay lip service to dissemination and reporting.

We can do better. This Theory of Use is my attempt to fill the gap.

In this post:

- Starting with Insights not Reports

- Building a Simple Theory of Use (Insight, Audience, Outcome)

- Step 1. Identify Your Insights

- Step 2. Consider Your Audiences

- Step 3. Determine Your Target Outcomes

- Step 4. Putting the Theory of Use pieces together.

- Step 5. Turning your Theory of Use into a Dissemination Plan

Starting with Insights, not Reports.

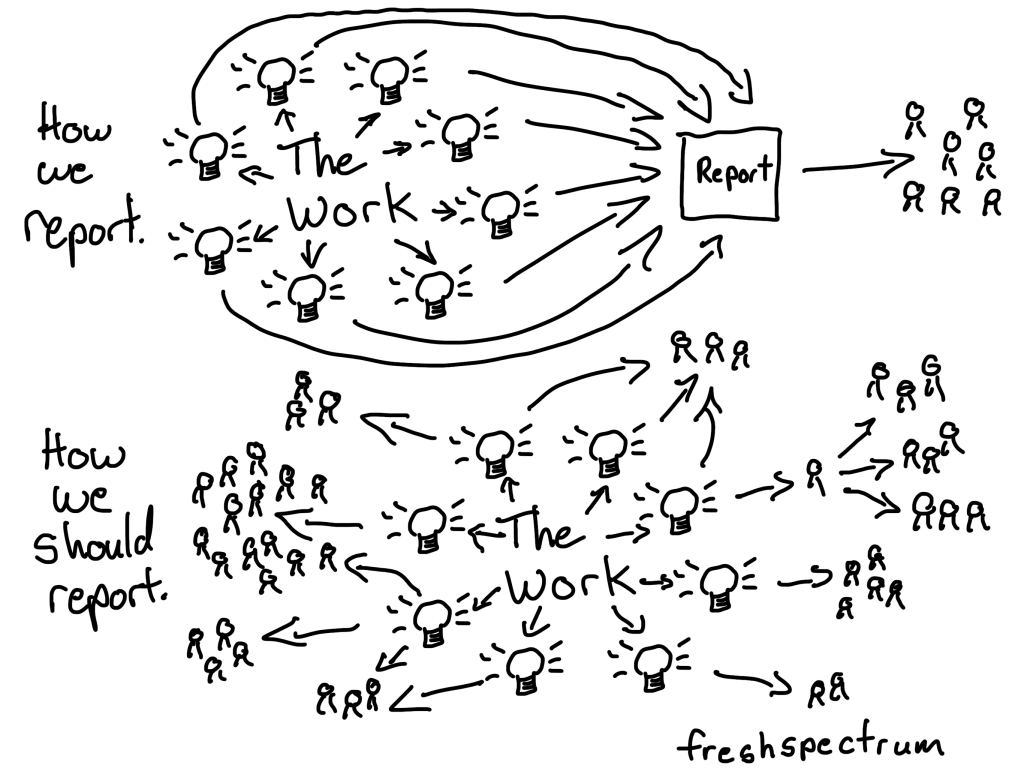

In research and evaluation, we tend to treat reports as if they are the singular end products of our work: one product that packages all of our questions, methods, findings, and recommendations together so that they can be distributed.

In other words, the report has become a proxy for our work.

You’ll see this in all sorts of textbooks, guides, and frameworks. The report is the culmination of our efforts, and the thing to be shared.

This might have been useful at a time when publishing meant paper and reaching others required travel or postage. But nowadays publishing can be an email, a YouTube video, a LinkedIn post… you get the idea. We don’t have to wait until we have all our ideas bundled together before sharing them with the people who need them. And even if we do want to wait, bundling everything together makes it harder to effectively share our work with others through modern channels.

As such, using the report as a proxy for our work holds us back from making an actual impact.

So I want you to change your mindset. The report is not the product of our work. Insights are the product of our work. And insights are generated throughout the research and evaluation process.

Focusing on insights fundamentally alters our reporting and dissemination process. With that in mind, we just need an approach for turning these insights into outcomes. That’s what I call my Theory of Use.

Building a Simple Theory of Use (Insight, Audience, Outcome)

My Theory of Use is built with three parts.

| 1. Insight | 2. Audience | 3. Outcome |
|---|---|---|

Step 1. Identify Your Insights

Think about the importance of research and evaluation questions. They center our work and allow us to choose appropriate methods. Because we know the specific questions that need answering, it’s easy to identify where our methods and tools are lacking.

Questions are the atomic unit of evaluation/research design.

In much the same way, insights are the atomic unit of evaluation/research dissemination. If we understand the insights our work has produced, then everything about reporting becomes easier.

So your goal, before you can even think about what dissemination products to choose, is to identify insights. Whether you already have a final technical report or are just starting a new project, here are some places to look for good insights to share.

1. Primary Findings

What the evaluation or research was specifically designed to discover. The answers to the evaluation or research questions.

2. Recommendations

Actionable guidance derived from the findings. Usually directed at the client but potentially relevant to other audiences too.

3. Secondary Findings

Things discovered that weren’t the focus of the original questions but emerged from the data. These can be significant but are rarely disseminated properly.

4. Surprising Findings

Results that contradicted expectations, assumptions, or conventional wisdom. Frequently the most interesting and field-shifting insights. They can be buried because they’re uncomfortable.

5. Negative Findings

What didn’t work, what had no effect, what made things worse. Enormously valuable to the field. Systematically underdisseminated due to client pressure and publication bias.

6. Process Insights

What was learned about how the evaluation or research was conducted. Valuable to organizations building evaluation capacity and to evaluators designing future studies.

7. Methodological Insights

Innovations, adaptations, or lessons about what methods work in what contexts, with what populations, under what conditions. Critical for peers. Almost always buried in methods sections nobody reads.

8. Contextual Insights

What was learned about the setting, community, or system that wasn’t directly about the program or research question but matters for understanding the findings or designing future work.

9. Unknown

What the data contains that hasn’t been fully explored yet. Released for transparency or future discovery.

Step 2. Consider Your Audiences

Insight quality depends on the audience. Just like one man’s trash is another man’s treasure, one audience’s insight is another audience’s boring block of text. To keep things simple, I like to use a five-audience framework, but you can always get more specific if you have the time.

Audience 1. Client

Every project has someone holding the purse strings — a funder, a boss, a commissioner. They need accountability, completeness, and defensibility. They are almost always your primary audience by default, even if you pretend otherwise.

Audience 2. Community

The people closest to the work — research participants, program staff, partner organizations, community members whose lives and experiences generated your findings. They have a claim on the work that no other audience does, but they’re often the least served.

Audience 3. Influencers

Community leaders, policymakers, decision-makers — the people who could actually act on your findings. They’re busy, skeptical, and driven by decisions they’re already trying to make.

Audience 4. Peers

Other researchers, evaluators, and practitioners doing work like yours. Sharing with this audience contributes to a body of knowledge that makes everyone’s work better.

Audience 5. Public

The broadest and most uncertain audience. Not every project warrants a public audience — and pretending otherwise wastes effort and oversells findings. But when your work genuinely affects people’s lives, this audience deserves a real strategy.

Step 3. Determine Your Target Outcomes

Use means more than simply being reached. Measures like PDF downloads and social media likes can be vanity metrics, because they’re really just measuring interaction. Outcomes go deeper than that. Here are some basic types of reporting outcomes.

1. Awareness Raised

People know something exists or happened that they didn’t know before. The floor of dissemination. Necessary but not sufficient on its own.

2. Questions Answered

People who came with specific questions get meaningful responses. Particularly important for community audiences who have a direct stake in what was found.

3. Mindset Shifted

People see a problem, population, or situation differently. Doesn’t require immediate action but creates the conditions for change later. Often undervalued as an outcome because it’s hard to measure.

4. Behavior Changed

People do something differently as a result of encountering the insight. The most direct form of use but also the hardest to achieve and attribute.

5. Decisions Informed

People in decision-making roles make better choices because of what they learned. Distinct from behavior change because it operates through formal authority rather than individual action.

6. Policy Supported/Challenged

Insights contribute to the development, defense, or disruption of policy at any level. Requires reaching the right people at the right moment in the right form.

7. Theory/Practice Informed

The field learns something that changes how practitioners work or how researchers think about a problem. Slower moving but potentially the most durable form of change.

8. Transparency/Unknown

The data exists in the world, accessible to whoever needs it, for whatever purpose emerges. Honest about the limits of what we can predict or control.

Step 4. Putting the Theory of Use pieces together.

Now let’s pull everything together with a hypothetical program evaluation. Let’s say you are evaluating an after-school literacy program. We always start with the insight, but we’ll also include a source column just so we know exactly where the insight was derived.

| Source | 1. Insight | 2. Audience | 3. Outcome |
|---|---|---|---|
| Student Assessment Data | Students who attended 3+ days/week showed twice the reading growth | Client | Decisions Informed |
| Teacher Interviews | Teachers felt unprepared to support English language learners | Influencers | Policy Supported/Challenged |
| Parent Survey | Many parents didn’t know how to reinforce literacy at home | Community | Behavior Changed |
| Observation Data | A specific tutoring technique was consistently the most effective | Peers | Theory/Practice Informed |
| Student Assessment Data | Program served mostly high-performing students despite targeting struggling readers | Influencers | Policy Supported/Challenged |
| Waitlist/Demand Data | Demand far exceeded capacity, suggesting a community-wide literacy crisis | Community | Mindset Shifted |
| Assessment Data | Literacy challenges are widespread across the community, far beyond program capacity | Community | Awareness Raised |
| Program Data | Unknown | Open | Transparency/Unknown |
| Community Focus Groups | Families wanted more communication in their home language | Influencers | Policy Supported/Challenged |
| Teacher Interviews | Teachers reported burnout as a major barrier to program fidelity | Client | Decisions Informed |
| All Data | Unknown | Public | Awareness Raised |

Step 5. Turning your Theory of Use into a Dissemination Plan

Now here is the cool part. Once you have a theory of use, all you really have to do to create a simple dissemination plan is add a column. For each row, what product would deliver the insight to the audience and potentially bring about the desired outcome?

| Source | 1. Insight | 2. Audience | 3. Outcome | Product |
|---|---|---|---|---|
| Student Assessment Data | Students who attended 3+ days/week showed twice the reading growth | Client | Decisions Informed | Executive Summary |
| Teacher Interviews | Teachers felt unprepared to support English language learners | Influencers | Policy Supported/Challenged | Policy Brief |
| Parent Survey | Many parents didn’t know how to reinforce literacy at home | Community | Behavior Changed | Illustrated One Pager, Space in the school district’s parent newsletter |
| Observation Data | A specific tutoring technique was consistently the most effective | Peers | Theory/Practice Informed | Journal Article/Blog Post |
| Student Assessment Data | Program served mostly high-performing students despite targeting struggling readers | Influencers | Policy Supported/Challenged | School Board Presentation |
| Waitlist/Demand Data | Demand far exceeded capacity, suggesting a community-wide literacy crisis | Community | Mindset Shifted | Town Hall Presentation |
| Assessment Data | Literacy challenges are widespread across the community, far beyond program capacity | Community | Awareness Raised | Community Report Card Website |
| Program Data | Unknown | Open | Transparency/Unknown | Public Dataset |
| Community Focus Groups | Families wanted more communication in their home language | Influencers | Policy Supported/Challenged | Legislative Brief |
| Teacher Interviews | Teachers reported burnout as a major barrier to program fidelity | Client | Decisions Informed | School Board Presentation |
| All Data | Unknown | Public | Awareness Raised | Social Media Campaign |
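If you keep your Theory of Use in a script rather than a spreadsheet, the same idea might look something like the sketch below. This is only an illustration, not part of the framework itself: the names `UseRow`, `add_products`, and `by_audience` are hypothetical, and the three rows are copied from the example table above. It shows that the dissemination plan is just the Theory of Use with one more field filled in.

```python
from collections import defaultdict
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class UseRow:
    """One row of a Theory of Use (hypothetical structure for illustration)."""
    source: str
    insight: str
    audience: str
    outcome: str
    product: Optional[str] = None  # left empty until the dissemination plan step


# Three rows taken from the example literacy program table.
theory_of_use = [
    UseRow("Student Assessment Data",
           "Students who attended 3+ days/week showed twice the reading growth",
           "Client", "Decisions Informed"),
    UseRow("Parent Survey",
           "Many parents didn't know how to reinforce literacy at home",
           "Community", "Behavior Changed"),
    UseRow("Observation Data",
           "A specific tutoring technique was consistently the most effective",
           "Peers", "Theory/Practice Informed"),
]

# Product chosen for each row, keyed by row position (an assumption of this sketch).
products = {
    0: "Executive Summary",
    1: "Illustrated One Pager",
    2: "Journal Article/Blog Post",
}


def add_products(rows, chosen):
    """Return new rows with the product column filled in; the originals are untouched."""
    return [replace(row, product=chosen.get(i)) for i, row in enumerate(rows)]


def by_audience(rows):
    """Group rows by audience so each audience's products can be planned together."""
    grouped = defaultdict(list)
    for row in rows:
        grouped[row.audience].append(row)
    return dict(grouped)


dissemination_plan = add_products(theory_of_use, products)

for audience, rows in by_audience(dissemination_plan).items():
    for row in rows:
        print(f"{audience}: {row.product} ({row.outcome})")
```

Grouping by audience is one possible design choice here; you could just as easily group by outcome or by product, depending on who is doing the planning.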

Final thoughts.

I designed Theory of Use to complement the evaluation and research frameworks you already use — not replace them. Utilization-Focused Evaluation gets us to intended use by intended users. Developmental Evaluation gets us to real-time learning. The CDC framework gets us to step six. Every major framework we have points toward use and then goes quiet at exactly the moment we need the most guidance.

Theory of Use is what happens next.

If you know of a framework that already does this well, I genuinely want to hear about it. Because I’ve been looking and I haven’t found one. And if this is actually the gap it appears to be, then we have a lot of work to do.

Our work is too important to disappear into a PDF archive. The insights are already there. Let’s get them to the people who need them.

I think this is brilliant! I love it. However, it would be very useful to me if you could do a YouTube video of how you would explain these steps to a person who is not familiar with evaluation. Thank you, Chris!

Yay Chris! This very much aligns with a realization our team had today as we struggled to streamline the end-of-contract evaluation reporting that public health departments are required to submit about their advocacy campaigns. We’ve been trying to balance the desire to lighten the reporting load but increase the utility of the reporting to the projects, and today we realized it should all be about insights. As a result, we whittled everything down to 5 questions as prompts:

I. What was the aim and outcome?

II. Which factors influenced the work?

III. How did the campaign tailor activities to specific populations?

IV. Which activities made the most difference?

V. What should the project do differently next time?

We are so excited to try it out!