Can someone give me the source that spawned the “Randomized Controlled Trials Gold Standard?” I can’t find it, and usually I’m pretty good at finding these kinds of things. I’m not looking for support or opposition. I’m looking for the source.

Can someone name a person who comfortably claims the title “Randomista?” I’ve seen it used to classify the views of others, often hurled like an insult, but I haven’t seen anyone own it. Maybe I’m not paying close enough attention.

I’m no RCT expert, but I’ve seen discussion all over the place lately. The discussion is often filled with rhetoric and jargon. Which, of course, is the perfect breeding ground for cartoons.

My Inspiration

If you want to follow my trail, here is a selection of works (posts/papers) that inspired this post:

Grover J. “Russ” Whitehurst: Does Pre-k Work? It Depends How Picky You Are

Thomas Cook (from 2006): Describing what is Special about the Role of Experiments in Contemporary Educational Research?: Putting the “Gold Standard” Rhetoric into Perspective

Lant Pritchett: An Homage to the Randomistas on the Occasion of the J-PAL 10th Anniversary: Development as a Faith-Based Activity

Chris Blattman (response to Lant Pritchett): The latest in faith-based development: Randomized control trials?

Duncan Green (from last November): Lant Pritchett v the Randomistas on the Nature of Evidence – Is a Wonkwar Brewing?

Last fall I had a little expert help learning more about the topic. The cartoons were a bit more technical: Randomized Controlled Trials, 5 illustration collaboration with Jen Hamilton

RCTs as the Gold Standard

I really expected to quickly find a source for “RCT as the Gold Standard.” If you can source it, let me know and I’ll update the post.

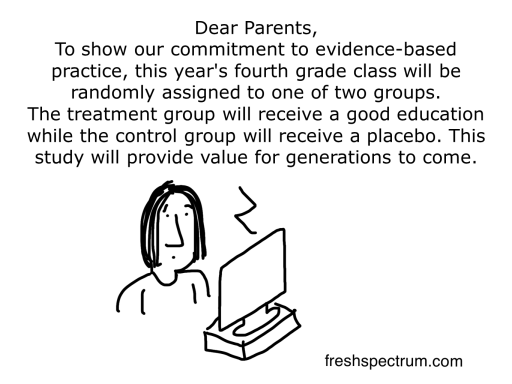

4th Grade RCT

When I worked in early childhood I had a boss who would say something like, “well fourth grade is not evidence-based.” We don’t really question whether or not kids should get a fourth grade education, but what if…

Indisputable evidence

Does it work? Should we keep funding? Easy questions right?

Randomista

I’m not a big fan of the term. Feels like fighting rhetoric with rhetoric.

RCT for RCTs

The thing I really don’t like about all the rhetoric is that it clouds over important arguments. AEA has a Research on Evaluation Topical Interest Group. I know they’re thinking about this stuff, and I remember reading a post about it on John Gargani’s Eval Blog.

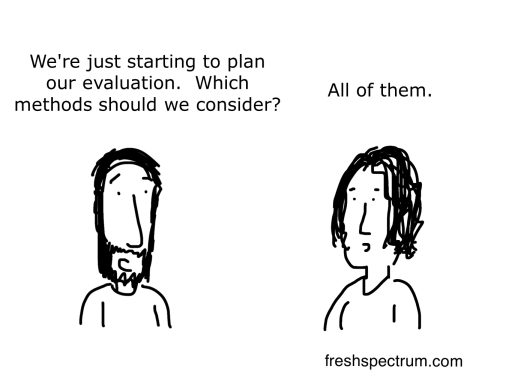

Mixed Methods

There’s no silver bullet method. It’s the only thing I think I know for sure.

The first person I ever heard use the term “randomista” was Jane Davidson. However, I’m not sure if she is the creator.

I’ve seen “randomista” in sources from the mid-2000s. It doesn’t bother me as much as the gold standard rhetoric in that at least it’s not claimed to be a standard.

My best guess is that the “gold standard” comes from the field of clinical research, probably in the 1980s. After taking hold in clinical trials, the rhetoric was just generalized to apply to any usage of RCTs regardless of context.

To my knowledge, no institution or association has declared the RCT to be the Gold Standard. The accepted rhetoric, though, changes the argument. You either support the RCT as a gold standard or you oppose it. Time and time again you can find the gold standard appearing in academic research, just without any underlying source.

Good question. I found the following interesting sources. Not explicitly using the label ‘gold standard’ but the sentiment is obvious. Many public bodies (in US and UK at least) still employ an ‘evidence hierarchy’ which places certain research designs over others in terms of how confidently a policy maker can use them.

http://www.nice.org.uk/niceMedia/pdf/GDM_Chapter7_0305.pdf

http://ies.ed.gov/ncee/wwc/pdf/reference_resources/wwc_procedures_v2_1_standards_handbook.pdf

Interestingly, the UK Department for International Development published this month a how to note on ‘Assessing strength of evidence’ which explicitly states “This Note is clear that there is no universally applicable hierarchy of research designs and methods.”

https://www.gov.uk/government/uploads/system/uploads/attachment_data/file/291982/HTN-strength-evidence-march2014.pdf

I also came across this from the European Evaluation Association, which has a fantastic reference list for anyone in doubt about the diversity of approaches available. http://europeanevaluation.org/sites/default/files/EES%20Statement_0.pdf

Thanks for all the resources Simon.

Here is what I’ve learned with some help from Alex Sutherland.

A doctor by the name of Harry Gold was instrumental in developing the blind RCT for the field of medicine in the late 1950s and 1960s.

From page 542 of the following article.

“As the blind RCT became more common in the late 1950s and 1960s, researchers often adopted Gold’s validation approach for demonstrating the new method’s objectivity.”

The double-blind, randomized, placebo-controlled trial: Gold standard or golden calf?

If you look at research papers written by Harry Gold in that time period, he did not use the term control group. He talked about a “treatment group” and a “standard” group.

It may just be a coincidence, but if so it’s uncanny. The “Gold Standard” could have been coined by talking about Harry Gold’s Standard. Or, in other words, the control group in a randomized blind study.

It seems pretty likely that the Gold Standard pre-dates the field of evaluation, at least it pre-dates the development of the vast array of methods we employ today.

Found in a book: It’s All About MeE: Using Structured Experiential Learning (“e”) to Crawl the Design Space by Lant Pritchett, Salimah Samji, and Jeffrey Hammer

“This term can be attributed to Angus Deaton (2009) and expresses the view that randomization has been promoted with a remarkable degree of intensity.”

The reference to Deaton is the following:

Angus S. Deaton. Instruments of development: Randomization in the tropics, and the search for the elusive keys to economic development. NBER Working Paper No. 14690, issued in January 2009.

Have a good day, hope it helps

Thanks Gilles,

Angus S. Deaton does appear to be the source for the current usage of Randomista. Earliest reference I see is from an October 2008 lecture by Deaton to the British Academy (http://rupertsimons.blogspot.com/2008/10/deaton-on-randomistas.html) https://www.britac.ac.uk/events/2008/keynes.cfm

Thanks for this excellent post Chris. I especially love these cartoons. Unfortunately, there ARE institutions that DO (still) declare the RCT to be the “gold standard.” To list just a few very influential institutions:

– The U.S. Department of Education Institute of Education Sciences:

“… educational practices that have been proven effective in randomized controlled trials—research’s gold standard for establishing what works …” http://ies.ed.gov/director/board/res5.asp

“As illustrative examples of the potential impact of evidence-based interventions on educational outcomes, the following have been found to be effective in randomized controlled trials – research’s ‘gold standard’ for establishing what works…”; and “Well-designed and implemented randomized controlled trials are considered the ‘gold standard’ for evaluating an intervention’s effectiveness, in fields such as medicine, welfare and employment policy, and psychology.”

Coalition for Evidence-based Policy (CEBP). (2003). Identifying and implementing educational practices supported by rigorous evidence: A user friendly guide. Washington, DC: U.S. Dept. of Education, Institute of Education Sciences, National Center for Education Evaluation and Regional Assistance.

http://www2.ed.gov/rschstat/research/pubs/rigorousevid/rigorousevid.pdf

– The National Research Council:

“The number of preventive interventions tested using randomized controlled trials (RCTs), an approach generally considered to be the ‘gold standard’ and strongly recommended by the 1994 IOM report, has increased substantially since that time…” (p. 21); and “…RCTs remain the gold standard…” (p. 26)

National Research Council. Preventing Mental, Emotional, and Behavioral Disorders Among Young People: Progress and Possibilities. Washington, DC: The National Academies Press, 2009.

http://www.nap.edu/catalog.php?record_id=12480

– The Abdul Latif Jameel Poverty Action Lab (J-PAL):

“Randomized evaluations are often deemed the gold standard of impact evaluation, because they consistently produce the most accurate results.”

http://www.povertyactionlab.org/methodology/what-randomization

– The Campbell Collaboration

These are just a few that use the “gold standard” term; others use different terms now like “top tier evidence” which has the same hierarchizing effect. I agree that the anti-RCT rhetoric gets a bit rabid at times (I am guilty of that myself at times), but these examples of powerful institutions that come right out and call RCTs the “gold standard” perhaps justify some of that.

For a really interesting discussion of the gold standard origins in medicine, see:

Timmermans, S., & Berg, M. (2003). The gold standard: The challenge of evidence-based medicine and standardization in health care. Philadelphia, Pa: Temple University Press.

Thanks again for this post,

Tom

Thanks Tom,

Are considered, generally considered, often deemed…

This is my problem. Any one of these places could say, we proclaim that the RCT is the gold standard because _____

But they don’t, the language always hints that it is already a standard so of course they support its use. It seems like the standard predates the field of evaluation. It’s a standard because it’s a standard…

It also seems like gold standard didn’t mean the same thing in its original context.

I agree with you on all counts. In everyday speech, the “gold standard” just refers to the best of something. In the context of evaluation and social science research, the “gold standard” moniker is taken to be self-evident by many who use it favorably (a wonderful and ironic example of circular reasoning), which helps to veil the tacit assumptions and epistemological politics the term contains.

On context, one of Scriven’s (and others’) most scathing critiques of the RCT as gold standard relates to the contextual differences between the term’s origins in medicine and its newer home in social science. Scriven claims that the RCT has “essentially zero practical application to the field of human affairs,” especially because it is almost impossible to have a truly single-blind (let alone double-blind) study in social research; RCTs in education are “zero-blind.” Scriven also points out that “the real ‘gold standard’ for causal claims is the same ultimate standard as for all scientific claims; it is critical observation.”

http://journals.sfu.ca/jmde/index.php/jmde_1/article/view/160/186

(It is worth noting that a few years earlier, Scriven, in his Claremont debate with Mark Lipsey, expressed a more moderate view of the RCT’s utility: “This is a very powerful tool, and sometimes much the best tool, but it has the same value as the torque wrench in a good mechanic’s toolbox. For certain tasks, you can’t beat it.”)

http://journals.sfu.ca/jmde/index.php/jmde_1/article/view/101/116

Thanks Tom, my comments section now has more useful resources than the vast majority of my blog posts put together 🙂

Adding to Tom’s list another US Government source: The USAID Evaluation Policy (2011) includes the following statement concerning impact evaluations (as contrasted with performance evaluations):

“• Impact evaluations measure the change in a development outcome that is attributable to a defined intervention; impact evaluations are based on models of cause and effect and require a credible and rigorously defined counterfactual to control for factors other than the intervention that might account for the observed change. Impact evaluations in which comparisons are made between beneficiaries that are randomly assigned to either a ‘treatment’ or a ‘control’ group provide the strongest evidence of a relationship between the intervention under study and the outcome measured.”

Thanks for the addition Jim 🙂

Great post! Although I don’t know the source of the “Gold Standard” I think you may find this article useful – “Parachute use to prevent death and major trauma related to gravitational challenge: systematic review of randomised controlled trials” by Gordon Smith and Jill Pell (http://www.bmj.com/content/327/7429/1459).

I like it, thanks for sharing Robyn!

Fascinating discussion, especially the possible origins of the Gold Standard terminology.

The good news is that the URL GoldStandardEvaluation.com has not been commandeered by the randomistas.

And neither has PlatinumStandardEvaluation.com, for that matter! The latter needs a redirect; will inform the domain name owner. Rest assured it is in capable hands. ;->

Jane

I like it Jane 🙂

Next step, move beyond the redirect and create a specific landing page.

Chris,

I’m not completely sure if you’re seeking the source for the term The Gold Standard or for the concept of RCTs being the standard. I might have a bit of info on the latter.

I believe that a lot of the discussion in recent (say the last 10) years in evaluation around RCTs being the gold standard stems from the 2005 notice on Scientifically Based Evaluation Methods that stated:

“…the Secretary considers random assignment and quasi-experimental designs to be the most rigorous methods to address the question of project effectiveness.”

Yep, at that time it looks like the Secretary of Ed owned it! AEA wrote a response to the proposed priority. Here are the resources I pulled together for AEA at that time, including the priority, AEA’s response, and other associations’ responses: http://www.eval.org/p/cm/ld/fid=83

Not sure if this gets you where you’re hoping to go, but perhaps another brick in the Yellow Brick Road, or Another Brick in the Wall (pick your pop metaphor).

Susan

This is fantastic Susan, thank you 🙂

Agree or disagree with the standard, I’m happier to see someone own it than not. I wish it was owned or sourced every time the term was used, but alas.

The origins are most certainly unclear. While I think it could plausibly be related to Harry Gold’s Standard, I don’t think people really started to use the term the way we use it now until the 80s. I think it took a little while after the actual “Gold Standard” was taken away in 71 before it saw broader use. My guess is that’s when it started, and likely in the field of medicine.

But I haven’t done enough research or found a true origin. When it comes down to it, RCTs are based on the creation of standards. Who knows, it could have started because of a simple word association.

I believe you are looking a little too narrowly at this notion that the phrase “gold standard” and RCT are uniquely related. The descriptor “a gold standard” comes from our use of the metal gold as the standard for backing the value of currency. Most of the world was on one version or another of “the Gold Standard” for centuries and it has widely come to mean the highest standard for a thing. Enter “the gold standard for” into Google and the autofill will give you the gold standard for many things. RCT is the most rigorous form of social science design simply because it is the only design that eliminates unobservable characteristics that could influence the results of a study. There’s nothing magic about it. Tom Cook and others have shown that there are possible designs that can replicate RCT results without randomization, but they are generally more complex than randomizing. Observation is surely a powerful tool for understanding why something works or doesn’t, but not a great tool for assessing whether it works, because we aren’t very good at controlling for confounders via observation. When a social intervention appears effective and we move it elsewhere, scale it up, wait 5 years, etc., it typically isn’t as effective the second time. It can’t be replicated because we failed to identify characteristics in the initial program that were contributing to the success. Randomization helps enormously to solve that problem. Ignore the hype on all sides. It’s just a tool.

I am intentionally looking at it narrowly. I believe the RCT is specifically tied to the Gold Standard Rhetoric. It’s a global meme and something that Tom Cook’s referred to as rhetoric. It leads to a hierarchical classification that pushes the method even when its use would be inappropriate.

I think we make a whole bunch of assumptions when talking about the merit of RCTs as the “Gold Standard.” Here’s the thing, whenever an expert methods person looks at it they say that RCTs are really useful in certain situations. But they pretty much always say something like, they are not the only valid method and are not always the most appropriate.

I’m with you, it’s just a tool and it has its uses. But it’s a tool that sits on a global pedestal, put there by un-sourced rhetoric and not by any method we would support as evaluators.

Lance says, “RCT is the most rigorous form of social science design simply because it is the only design that eliminates unobservable characteristics that could influence the results of a study.” But RCT does not eliminate unobservables. Those using RCT hope that by being “random enough” in assigning a sufficient number of subjects to two or more different groups, the “unobservables” will be distributed evenly enough among the groups that any differential effect on outcomes caused by those unobservables becomes unlikely.
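For anyone who wants to see that last point in numbers, here is a minimal simulation sketch. It is only an illustration under stated assumptions, not a model of any real study: the single unobserved trait, its standard normal distribution, the group sizes, and the function name `mean_imbalance` are all hypothetical. It randomly splits subjects into two groups many times and reports the average gap in the unobserved trait between the groups.

```python
import random
import statistics


def mean_imbalance(n_subjects, n_trials=500, seed=0):
    """Average absolute gap in an unobserved trait between two
    randomly assigned groups, over many simulated assignments."""
    rng = random.Random(seed)
    gaps = []
    for _ in range(n_trials):
        # Hypothetical unobserved trait (e.g. motivation), assumed N(0, 1).
        trait = [rng.gauss(0, 1) for _ in range(n_subjects)]
        # Random assignment: shuffle, then first half treated, rest control.
        rng.shuffle(trait)
        half = n_subjects // 2
        treated, control = trait[:half], trait[half:]
        gaps.append(abs(statistics.fmean(treated) - statistics.fmean(control)))
    return statistics.fmean(gaps)


if __name__ == "__main__":
    for n in (20, 200, 2000):
        print(n, round(mean_imbalance(n), 3))
```

In this sketch the gap shrinks as the number of subjects grows but never reaches exactly zero, which fits the wording above: randomization makes a large imbalance in unobservables unlikely rather than eliminating the unobservables themselves.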